My GitHub Copilot Journey - Part 1: The First Ask

Overview

I spent 20 minutes googling an error message. Then I asked an AI, got the answer in 8 seconds, and spent the next 10 minutes wondering why I hadn't done that sooner.

To me, this is a story about trust. Not the blind kind — the calibrated kind. The kind you build one correct answer at a time, and that honestly takes a bit of patience (but it's worth it, I promise 😊).

The search-engine reflex

We've all trained ourselves to do the same thing when we're stuck: open a browser, type a question, scan ten blue links, open three tabs, compare answers, close two of them, try the remaining one, and when that doesn't work, start over. (I won't go into how many hours of my life have disappeared into this loop, but let's just say it's a lot.)

It works. It's slow, but it works. And because it's worked for 20 years, it's become a reflex. Not a decision — a reflex.

My AI journey started the moment I interrupted that reflex. I had been googling things for so long that trying something different felt almost unnatural.

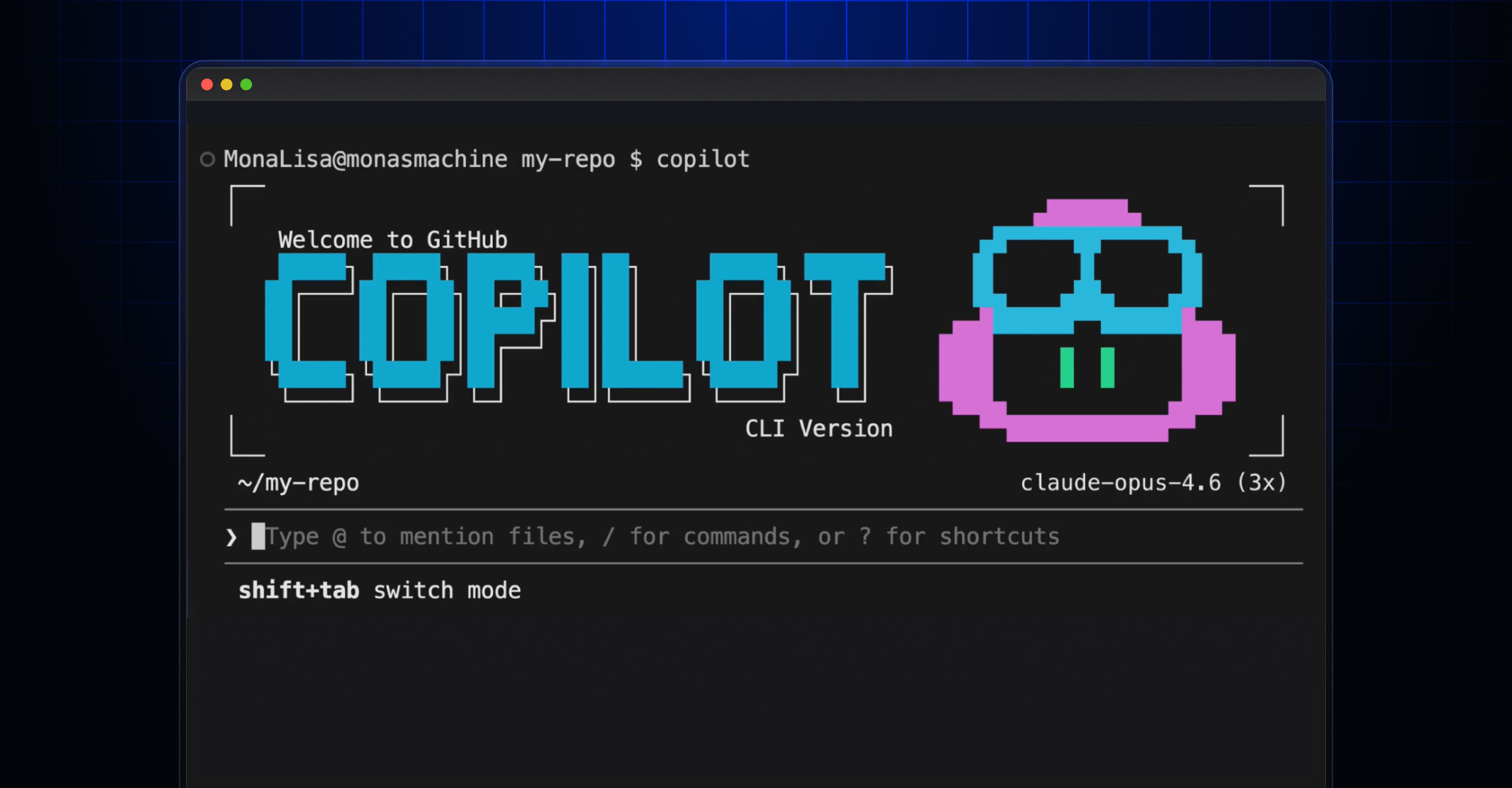

I had an error I couldn't figure out. Some command-line tool was failing with a message that felt deliberately cryptic (you know the type — the ones that seem designed to make you feel inadequate). Instead of opening Chrome, I opened GitHub Copilot in my terminal and typed: "This command returns an error, can you figure out which tool I need?"

It answered. Correctly. In one turn.

No ads. No "this thread is from 2019." No cookie banners. Just the answer. I must say, that felt pretty great 😊.

What "starting small" actually looks like

In my first week, I averaged about five sessions, each between 3 and 6 exchanges. Every single one followed the same pattern (nothing fancy, I'm not going to pretend there was a grand strategy here):

- I hit a problem

- I almost opened a browser

- I caught myself and typed the question instead

- I got an answer

- I verified it (every time, without exception)

That's it. No grand vision. No "let me build an app with AI." Just: ask instead of search. For me, as someone who has been building things on the web for years, this felt like a surprisingly humble beginning.

The things I asked about were mundane: error messages, API syntax, configuration flags, "what does this parameter do?" The kind of questions that have definitive, verifiable answers. My very first infrastructure question? "What is the correct name for this cloud region?" — a fact I could verify in seconds.

And that's the point. You calibrate trust on questions where you can check the answer. You don't start by asking for architectural advice. You start by asking for facts you can verify in 10 seconds.

Pro Tip: Start with questions that have a single, objectively correct answer — error messages, API syntax, region names. If you can verify it in under 30 seconds, it's the perfect calibration question.

You might be in this stage if:

- You've tried AI a few times but keep going back to Google out of habit

- You verify every single answer before using it

- Your sessions are short — 5 turns or fewer

- You mostly ask about errors, syntax, or "how do I do X?"

The trust calibration loop

Here's what I didn't expect: the biggest barrier wasn't Copilot's capability. It was my own suspicion. (I'm not going to lie — I was quite skeptical at first, maybe even a bit stubborn about it.)

Every answer triggered the same internal response: Is this right? Should I double-check? What if it's confidently wrong?

That suspicion is healthy. But it also slows you down if you don't manage it. I won't go into the psychology behind this in too much detail, but here's how the calibration actually works in practice:

Week 1: Ask a question. Verify by googling. AI was right. Repeat 20 times. Start to notice: it's been right about 85-90% of the time on factual questions.

Week 2: Ask a question. Verify, but faster — you're now spot-checking rather than deep-verifying. You know the types of questions where it's reliable (factual, well-documented topics) and where it needs a second opinion (niche, recent, or opinion-based topics).

Week 3: Ask a question. Use the answer. Verify only when something feels off. The reflex has shifted from "search then verify" to "ask, use, and trust-but-verify."

This is not blind trust. It's evidence-based trust, built on dozens of micro-experiments where you tested Copilot and tracked the results. The beauty of this approach is that it's completely low-risk — you're never betting on something you can't verify yourself.
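If you'd rather track those micro-experiments in a file than in your head, here is a minimal sketch of what that could look like. The category names and log entries are made-up placeholders, not my actual data, and `accuracy_by_category` is just a hypothetical helper name.

```python
from collections import defaultdict

# Hypothetical log of calibration checks:
# (question category, did the answer hold up when verified?)
# These entries are placeholders; replace them with your own results.
checks = [
    ("error message", True),
    ("api syntax", True),
    ("config flag", False),
    ("error message", True),
]


def accuracy_by_category(checks):
    """Return {category: fraction of answers that verified as correct}."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for category, was_correct in checks:
        totals[category] += 1
        if was_correct:
            correct[category] += 1
    return {category: correct[category] / totals[category] for category in totals}


# Print one line per category so weak spots stand out.
for category, rate in sorted(accuracy_by_category(checks).items()):
    print(f"{category}: {rate:.0%}")
```

Splitting the results by category is the useful part: it shows where spot-checking is probably enough and where a second opinion is still worth the time.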

The moment the habit clicks

The shift happened somewhere around session 15. I was working on something, hit a snag, and reached for the terminal without thinking. Not as a deliberate experiment. As a reflex.

I realized that was the inflection point. Not when AI gives you a great answer — but when asking AI becomes your default action instead of your backup plan.

In behavioral terms: the activation energy for "ask AI" dropped below the activation energy for "open browser." From that point, every search-engine query felt like unnecessary friction. The downside is that you start feeling slightly impatient with the old way of doing things (which, to be fair, is not entirely rational 😊).

What I was not doing (yet)

It's worth being honest about what this stage isn't. In my first two weeks (and I want to be transparent here: the goal is not to make this sound more impressive than it was):

- I never asked Copilot to build something

- I never had a session longer than 10 exchanges

- I never asked for an opinion or a strategy

- I never used it for anything outside of code

And that's fine. The point of this stage isn't productivity gains. The point is rewiring the reflex. The gains come later — much bigger than you'd expect — but only if you build this foundation first. If you ask me, this initial phase is actually the most important one in the entire journey.

Try this week

Here's a concrete challenge. No vague "try using AI more" advice — something measurable:

Replace five Google searches with AI asks this week. Pick questions with verifiable answers (error messages, syntax, config). After each one, score it:

- ✅ Correct and faster than searching

- ⚠️ Correct but you needed to verify

- ❌ Wrong or misleading

At the end of the week, tally your scores. If you got 3+ green checks, you've started building calibrated trust. You're ready for Part 2. Why not start today? (If you haven't already, that is 😊)
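For anyone who wants to keep score in code, the tally could be sketched like this. The score labels and the sample week are assumptions made up for illustration, and `ready_for_part_2` is a hypothetical helper; the only rule taken from the challenge is the threshold of three green checks.

```python
from collections import Counter

# Hypothetical labels for the three scores in the challenge:
#   "correct_faster"   -> green check
#   "correct_verified" -> warning sign
#   "wrong"            -> red cross
# Sample week with made-up results; replace with your own five asks.
week = ["correct_faster", "correct_verified", "correct_faster", "wrong", "correct_faster"]


def ready_for_part_2(scores, threshold=3):
    """Tally the scores and check whether green checks reach the threshold."""
    tally = Counter(scores)
    return tally, tally["correct_faster"] >= threshold


tally, ready = ready_for_part_2(week)
print(dict(tally))
print("Ready for Part 2" if ready else "Keep calibrating")
```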

In Part 2, I'll talk about what happens when you stop asking quick questions and start having real conversations (20, 30, even 50 turns deep) and how that changes what you're able to build.

This is Part 1 of the My GitHub Copilot Journey series.

Now go ask GitHub Copilot something small and verifiable. 🚀

If you need info, or just want to share your own experience, please do not hesitate to contact me!

Kind regards, Tim